The first in a series of three presentations from Ferrum Health on the use of AI to impact population health, “AI in Oncology” provided Strategic Radiology members a provocative vision of a powerful new role for radiology in the health care enterprise from Elie Balesh, MD, private practice diagnostic and interventional radiologist and medical director of Ferrum Health.

“Most health systems and radiology groups in the US have at least considered using AI, but very few, probably less than 5 or 10%, have actually implemented any kind of nuts-and-bolts meaningful AI program,” noted Balesh. “While the appetite to test drive AI, potentially even pay for AI, exists across a large majority of these players, very few have put together the business plans, financial models, or earmarked budgets to actually implement AI technology and capitalize on its value.”

More than 1,200 companies are knocking on health system doors, reaching out to leadership and other stakeholders, trying to get their discrete, short-term solutions purchased, installed, and deployed. “Very few AI vendors provide end-to-end, soup-to-nuts comprehensive clinical and operational workflow solutions, which is what is needed at the enterprise healthcare level,” he explained. “Each of these widgets and gadgets may have variable configuration requirements with scanner hardware, cloud-based post-processing software, and integration with PACS, RIS, or the EHR, and naturally may come with different licensing agreements and require servicing by different IT and cybersecurity teams.”

These hurdles constitute what Balesh referred to as the “last mile problem.” Enterprise healthcare requires an out-of-the-box platform distribution system to allow AI software to operate at scale and make the entire user experience frictionless.

Spoiler alert: that is precisely what Ferrum Health aims to do, and Dr. Balesh proceeded to describe how the company’s platform can improve outcomes in oncologic care. “When you look at lung cancer in particular, more than 90% of lung cancer patients will experience a 6-month delay in diagnosis or treatment,” he noted, identifying six points in the oncologic journey where care gaps occur:

“This can happen at the top of the funnel with eligible population screening gaps or post-imaging with a missed finding or missed follow-up examinations,” he explained.

The Quality Workflow

Dr. Balesh identified two common workflow formats that typically characterize AI implementations: the one most familiar to radiologists is CAD at the point-of-care, which involves an algorithm highlighting suspected abnormalities. The other workflow is what Ferrum Health calls the “quality workflow.”

“In this model, the algorithm is invisible to me as the radiologist,” Dr. Balesh began. “I’m going about my day, patients are coming in, getting their examinations, I pull them off of the worklist, I review them, I dictate and sign the report, and I move on. This is where the algorithm starts getting to work in the background to double-check my work.”

The quality workflow requires a dyad of a machine vision algorithm with a natural language processing algorithm. As the radiologist works through his or her queue, the vision algorithm analyzes the DICOM images for one or more particular pathologic findings, and the language algorithm parses the text of the dictated report to understand:

“In other words, if the machine vision algorithm is confident that there is indeed a suspicious lung nodule present on these images but my report makes no positive mention of said nodule, the system would flag that as a discrepancy,” he explained.
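The discrepancy rule described in that quote can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Ferrum Health's implementation: the `Exam` record, its field names, the `is_discrepant` and `review_queue` helpers, and the 0.9 confidence threshold are all hypothetical, and the outputs of the vision and language algorithms are simply supplied as inputs.

```python
from dataclasses import dataclass


@dataclass
class Exam:
    # All fields are hypothetical stand-ins for the two algorithms' outputs.
    accession: str
    vision_confidence: float       # vision algorithm's confidence (0 to 1) that a nodule is present
    report_mentions_finding: bool  # language algorithm's result: report positively mentions the nodule


def is_discrepant(exam: Exam, threshold: float = 0.9) -> bool:
    """Flag an exam when the vision algorithm is confident but the report is silent.

    The 0.9 threshold is an assumed value chosen for illustration only.
    """
    return exam.vision_confidence >= threshold and not exam.report_mentions_finding


def review_queue(exams: list[Exam], threshold: float = 0.9) -> list[str]:
    """Return accession numbers of exams that should go to secondary review."""
    return [e.accession for e in exams if is_discrepant(e, threshold)]


exams = [
    Exam("A001", 0.97, False),  # confident finding, not in report: flagged
    Exam("A002", 0.95, True),   # confident finding, documented: not flagged
    Exam("A003", 0.20, False),  # low confidence: not flagged
]
print(review_queue(exams))  # ['A001']
```

Only the first exam is flagged, because it is the only one where a confident image finding has no matching mention in the report.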

Righting the Ship of Care

The Ferrum platform collates discrepant examinations for secondary review by a qualified reader, usually in a separate session. Some use cases may be time sensitive, however, and flagged for immediate review by the same reader. If a true miss is identified, the original interpreting radiologist is notified and may in turn issue an addendum and communicate with the referring physician, or even the patient, to ensure appropriate clinical management.

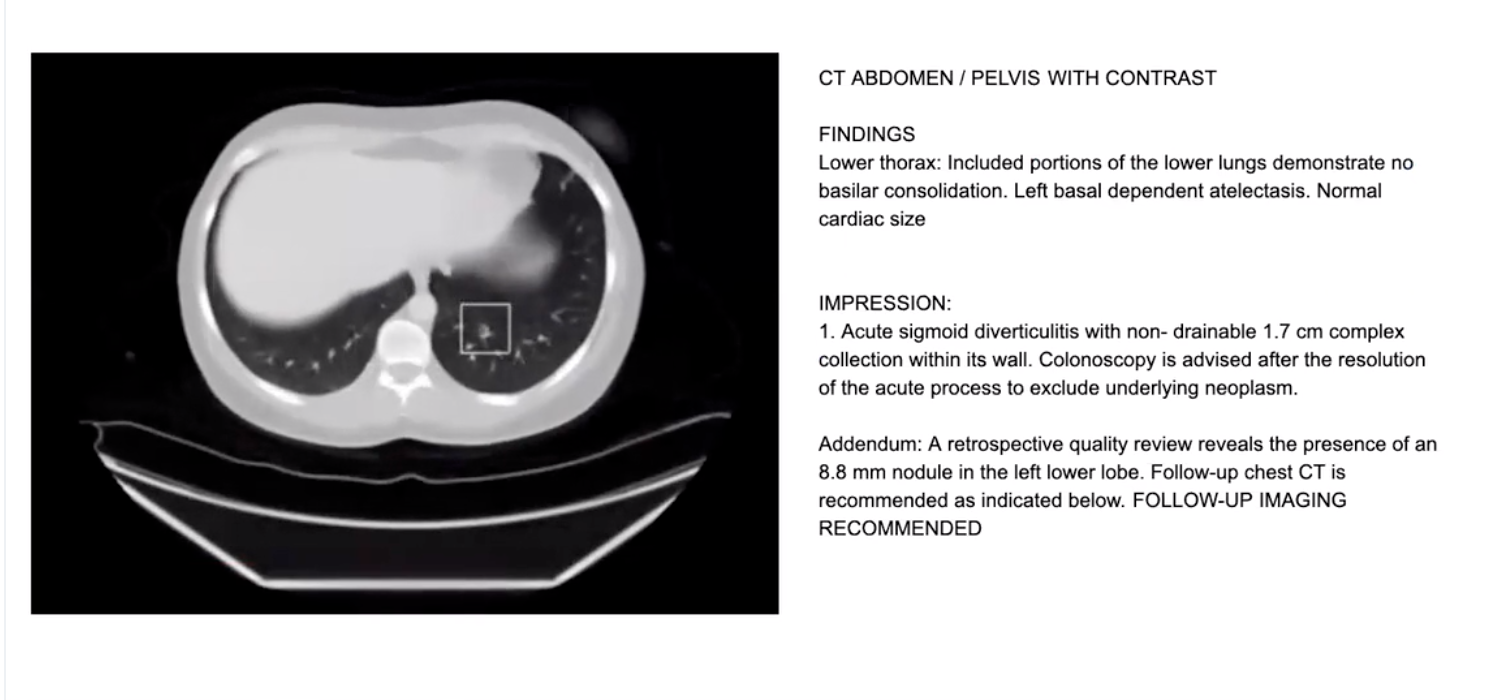

The Ferrum Health platform has successfully deployed an algorithm to identify pulmonary nodules with a square bounding box. The original reader of the CT Abdomen/Pelvis below had not identified the pulmonary nodule, but the algorithm did, and a reviewer issued an addendum.

“We flagged this examination at one of our clinical partner sites for review, and they issued an addendum,” Balesh said. “Importantly, this case was being performed as an abdominal CT, it was an incidental finding for a patient with abdominal pain that had acute diverticulitis. The nodule was an incidentaloma, and we want to make sure these patients don’t fall through the cracks.”

Balesh shared other examples, including a CTA PE performed for COVID pneumonia on which the vision algorithm found a missed 12 mm solid rounded nodule in an easy-to-miss blind spot, partially obscured by the hilar vessels. “We have many of these examples coming out of our database,” he said. “Sometimes we catch frank malignancies, spiculated nodules or masses which then go on for PET-CT, biopsy, or resection, which were simply not documented for any number of possible reasons. In those scenarios, the Ferrum system provides tremendous value.”

Performing at the Level of Big Data

In moving on to the bigger picture, Dr. Balesh emphasized the potential to impact outcomes on a large scale. “What I’ve shown you thus far is a handful of anecdotes,” he said. “What I’d like to convince you of is the real-world evidence that we are performing at the big data level at our large health care sites.”

In an early small study performed in Asia, the lung nodule vision algorithm was deployed both retrospectively and prospectively on 4,900 sequential CTs that contained any amount of lung parenchyma: chest CTs, abdominal CTs that included the lung bases, neck CTs that included the lung apices, and cardiac CTs that included the surrounding lung. The performance of the algorithm was compared with the performance of the radiologists in a typical quality assurance analysis.

Of the 4,900 input studies, 450 had a potential discrepancy, winnowed down to 104 likely true discrepancies after a quality review. They were submitted to a third-party radiologist arbitrator, who required six hours to sort through the 104 cases, 50 of which were confirmed to contain undocumented pulmonary nodules, seven of which were classified as clinically significant. Addenda were issued: some went on for imaging follow-up; some went for biopsy; some went on for resection.

A larger study conducted in Northern California with partner Sutter Health included 30,000 studies that were filtered down to 1,137 based on the findings of the dyad algorithm. Of those studies thought to be discrepant, 200 were classified as near misses, 118 deemed clinically significant, suggesting they may alter downstream management; 57 (48.3%) were found on CT abdomen pelvis while 34 (28.8%) were found on CT chest.
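For readers who want to check the arithmetic, the short Python sketch below recomputes the percentages from the counts reported for the two studies. The `pct` helper and the variable names are illustrative only; the counts come from the figures cited above, and the final line is a simple subtraction rather than a reported result.

```python
def pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


# Early study in Asia: counts as reported in the text.
asia_input, asia_flagged = 4900, 450
print(pct(asia_flagged, asia_input))      # 9.2 (% of studies with a potential discrepancy)

# Northern California study: counts as reported in the text.
sutter_input, sutter_flagged = 30000, 1137
significant, abd_pelvis, chest = 118, 57, 34
print(pct(sutter_flagged, sutter_input))  # 3.8 (% of studies flagged by the dyad)
print(pct(abd_pelvis, significant))       # 48.3 (% of significant misses on CT abdomen pelvis)
print(pct(chest, significant))            # 28.8 (% of significant misses on CT chest)

# Derived by subtraction, not reported: significant misses on other exam types.
print(significant - abd_pelvis - chest)   # 27
```

The recomputed shares match the 48.3% and 28.8% given in the text, with both taken against the 118 clinically significant cases.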

“Our original hypothesis was that the majority were going to be incidental findings for CT abdomen, neck, or cardiac, but up to a third turned out to be on CT chest,” Balesh reported. “Every radiologist knows to always make sure to look for nodules on chest CT—but high workloads, fatigue, and distractions can hinder performance.”

Balesh rested his case for AI, suggesting that the quality workflow promoted by Ferrum Health is easy to justify on the business, medico-legal, and clinical fronts. “The take-home message here is that as we become overwhelmed with volume, as our speed continues to increase just to keep up with the daily demands of utilization, AI has a highly valuable potential role to play as a second reader, as a safety net.”

Ferrum Health is taking this approach outside of the lungs and into other organs, working with vendors to develop analogous algorithms that can identify missed liver, renal, pancreatic, adnexal, and osseous lesions in oncologic imaging, as well as algorithms that can find many other clinically significant imaging findings.

“We are working on missed pulmonary emboli, intracranial hemorrhage, signs of early ischemic stroke on CT, aneurysms, and fractures,” he said. “As our vendor partners grow in number, capability, and most importantly performance, we are able to host these on the Ferrum platform and deploy a comprehensive solution at scale across enterprise health systems to really move the needle.”

Mark your calendars for the remaining two sessions in the Ferrum Health–SR Clinical AI Education Series:

AI in Vascular Care, Thursday, August 18, at 5 PM Pacific

AI in Breast Care, Thursday, October 6, at 5 PM Pacific

Hub is the monthly newsletter published for the membership of Strategic Radiology practices. It includes coalition and practice news as well as news and commentary of interest to radiology professionals.
